
Why Is Coax 50 Ohms?

If you work with coaxial cable, you probably know it is available in a number of different impedances, but the most common one for amateur radio is 50-ohm cable.

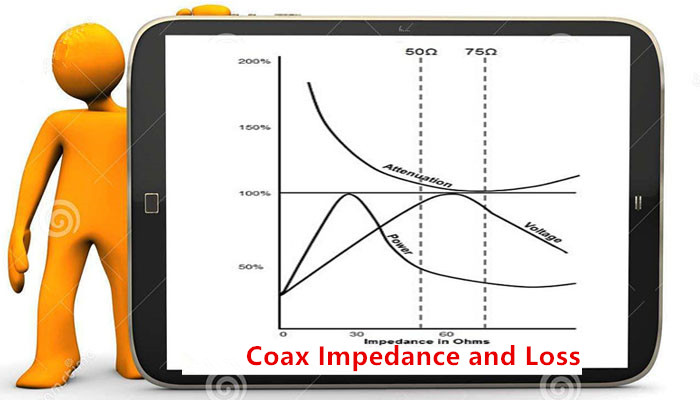

Do you know why it is 50 ohms, and not some other number? The answer can be seen in the graph below.

This was produced by two researchers, Lloyd Espenschied and Herman Affel, working for Bell Labs in 1929. They were going to send RF signals (4 MHz) for hundreds of miles, carrying a thousand telephone calls. They needed a cable that would carry both high voltage and high power. In the graph below, you can see the ideal rating for each. For high voltage, the perfect impedance is 60 ohms. For high power, the perfect impedance is 30 ohms.

This means, clearly, that there is NO perfect impedance to do both. What they ended up with was a compromise number, and that number was 50 ohms.
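The 60-ohm and 30-ohm figures can be reproduced with a short calculation. The sketch below is only an illustration under stated assumptions, not the researchers' original method: it assumes an air-dielectric cable, a fixed outer conductor diameter, and a fixed breakdown field at the surface of the inner conductor. The function names (`z0`, `rel_voltage`, `rel_power`, `best_ratio`) are made up for this example.

```python
import math

ETA0 = 376.730313668  # impedance of free space, in ohms


def z0(ratio, eps_r=1.0):
    """Characteristic impedance of coax for a given outer/inner diameter ratio D/d."""
    return ETA0 / (2 * math.pi * math.sqrt(eps_r)) * math.log(ratio)


def rel_voltage(ratio):
    # Assumption: fixed outer diameter and fixed breakdown field at the
    # inner conductor. Peak voltage is then proportional to ln(x) / x.
    return math.log(ratio) / ratio


def rel_power(ratio):
    # Peak power is V^2 / Z0, which is proportional to ln(x) / x^2.
    return math.log(ratio) / ratio ** 2


def best_ratio(metric, lo=1.01, hi=10.0, steps=100_000):
    """Brute-force search for the D/d ratio that maximizes the given metric."""
    candidates = (lo + (hi - lo) * i / steps for i in range(steps + 1))
    return max(candidates, key=metric)


z_voltage = z0(best_ratio(rel_voltage))  # impedance with the highest voltage rating
z_power = z0(best_ratio(rel_power))      # impedance with the highest power rating

print(f"Best for voltage: {z_voltage:.1f} ohms")
print(f"Best for power:   {z_power:.1f} ohms")
```

Under these assumptions the voltage optimum lands very close to 60 ohms and the power optimum very close to 30 ohms. A solid or foam dielectric (`eps_r` greater than 1) lowers the impedance for the same geometry.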

You will note that 50 is closer to 60 than it is to 30, and that is because the voltage is the factor that will kill your cable. Just ask any transmitter engineer. They talk about VSWR, voltage standing wave ratio, all the time. If their coax blows up, it is the voltage that is the culprit.
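To see why a mismatch raises the voltage on the line, here is a minimal sketch using the standard reflection coefficient and VSWR formulas. It assumes a lossless line and a fixed forward power; the function names and the 100 W example are hypothetical and chosen only for illustration.

```python
import math


def reflection_coefficient(z_load, z0=50.0):
    """Magnitude of the reflection coefficient for a load on a line of impedance z0."""
    return abs((z_load - z0) / (z_load + z0))


def vswr(z_load, z0=50.0):
    """Voltage standing wave ratio (undefined for a total reflection, gamma = 1)."""
    gamma = reflection_coefficient(z_load, z0)
    return (1 + gamma) / (1 - gamma)


def peak_line_voltage(forward_power_w, z_load, z0=50.0):
    """Highest peak voltage along a lossless line for a given forward power."""
    v_forward = math.sqrt(2 * forward_power_w * z0)  # peak volts of the forward wave
    return v_forward * (1 + reflection_coefficient(z_load, z0))


# 100 W forward power on 50-ohm coax, matched load versus a 150-ohm load
print(vswr(50.0), peak_line_voltage(100.0, 50.0))
print(vswr(150.0), peak_line_voltage(100.0, 150.0))
```

With a matched load the peak voltage is about 100 V. With a 150-ohm load the VSWR is 3 and the peak rises to about 150 V for the same forward power, which is why a bad match stresses the cable's voltage rating first.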

So why not 60 ohms? Just look at the power handling at 60 ohms: it is below 50%. That is horrible! At the compromise value of 50 ohms, the power handling improves a little. So 50-ohm cables are intended to carry both power and voltage, like the output of a transmitter.

Still, I get a lot of feedback from people who use 50 ohms for small signals; you can see above that they are taking a 2-3 dB hit in attenuation. Excuses I hear are “It's too late to change now!” or “That's the impedance of the box itself.” This is especially true of test gear, which is almost universally 50 ohms. You have to buy a matching network to use it at 75 ohms or any other impedance. But there are lots of applications where 50 ohms is the best choice.